Deep Convolutional Neural Networks for Texture Classification

Autumn 2016

Master Diploma

Project: 00321

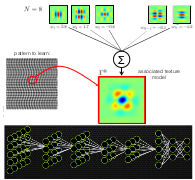

Texture classification has received increasing interest over the past 15 years in medical image analysis for personalized medicine. Most existing methods extract handcrafted image features (e.g., filterbanks, local binary patterns (LBP), gray-level co-occurrence matrices) and then build a multivariate model to predict the texture classes. Deep convolutional neural networks (CNNs) for object recognition in computer vision have been shown to provide excellent results in many applications. Deep CNNs learn multiple filters in each convolutional layer of a deep neural network architecture using backpropagation weight updates. A major drawback of this approach is that it requires large amounts of training data and computation time to learn all pixel weights (i.e., free parameters) of the filters. Deep CNNs are currently seldom used for texture analysis.
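As an illustration of the handcrafted features mentioned above, the following is a minimal sketch of the basic 8-neighbour LBP descriptor in plain Python. The function names `lbp_code` and `lbp_histogram` are hypothetical, and the sketch omits the rotation-invariant and multi-scale variants commonly used in practice.

```python
def lbp_code(image, r, c):
    """Return the 8-neighbour local binary pattern code at pixel (r, c)."""
    center = image[r][c]
    # Neighbours are visited clockwise, starting at the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    code = 0
    for bit, (dr, dc) in enumerate(offsets):
        # A neighbour at least as bright as the center sets its bit.
        if image[r + dr][c + dc] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(image):
    """Return the 256-bin histogram of LBP codes over interior pixels."""
    hist = [0] * 256
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            hist[lbp_code(image, r, c)] += 1
    return hist
```

The histogram would then serve as the feature vector passed to a classifier; the descriptor itself has no learned parameters.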

In this project, the student will first use deep CNNs for texture analysis and evaluate their performance on the Outex texture database. This performance can be compared with that of existing methods based on LBPs and learned steerable wavelet representations. The student will then develop a CNN whose filters are obtained from linear combinations of Riesz kernels at each layer. The outputs (i.e., feature maps) of the convolutional layers will contain the responses of each linear combination of the Riesz components. The resulting CNN will inherit the desirable properties of Riesz filterbanks (invariance to rotation, scale, and translation, as well as steerability) and will require far fewer free parameters than classical deep CNNs.
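The idea of building each filter as a linear combination of fixed kernels can be sketched as follows. This is only an illustration under stated assumptions: the two 3x3 Sobel kernels in `BASIS` are simple derivative-like stand-ins, not actual Riesz kernels, and the names `combine`, `correlate2d`, and `layer` are hypothetical. Only the combination coefficients would be learned; the basis stays fixed.

```python
# Fixed basis kernels. ASSUMPTION: 3x3 Sobel derivatives used as
# stand-ins for illustration; these are NOT actual Riesz kernels.
BASIS = [
    [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]],    # horizontal derivative
    [[-1, -2, -1], [0, 0, 0], [1, 2, 1]],    # vertical derivative
]


def combine(basis, coeffs):
    """Build one filter as a weighted sum of the fixed basis kernels."""
    size = len(basis[0])
    return [[sum(w * k[i][j] for w, k in zip(coeffs, basis))
             for j in range(size)]
            for i in range(size)]


def correlate2d(image, kernel):
    """Valid-mode cross-correlation, as computed by a CNN layer."""
    k = len(kernel)
    rows = len(image) - k + 1
    cols = len(image[0]) - k + 1
    return [[sum(kernel[i][j] * image[r + i][c + j]
                 for i in range(k) for j in range(k))
             for c in range(cols)]
            for r in range(rows)]


def layer(image, basis, weights):
    """Return one feature map per coefficient vector in `weights`."""
    return [correlate2d(image, combine(basis, w)) for w in weights]


# Two filters, each described by 2 coefficients instead of 9 pixel weights.
weights = [[1.0, 0.0], [0.5, 0.5]]
ramp = [[0, 1, 2, 3] for _ in range(4)]      # intensity grows left to right
feature_maps = layer(ramp, BASIS, weights)
```

With this toy basis, each filter has 2 free parameters rather than the 9 pixel weights of an unconstrained 3x3 filter. Because the operation is linear, each feature map equals the same weighted sum of the basis responses.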

- Supervisors

- Adrien Depeursinge, adrien.depeursinge@epfl.ch, 021 693 51 15, BM 4141

- Michael Unser, michael.unser@epfl.ch, 021 693 51 75, BM 4.136