Investigating optimization methods for generalized lasso

2022

Master Semester Project

Project: 00421

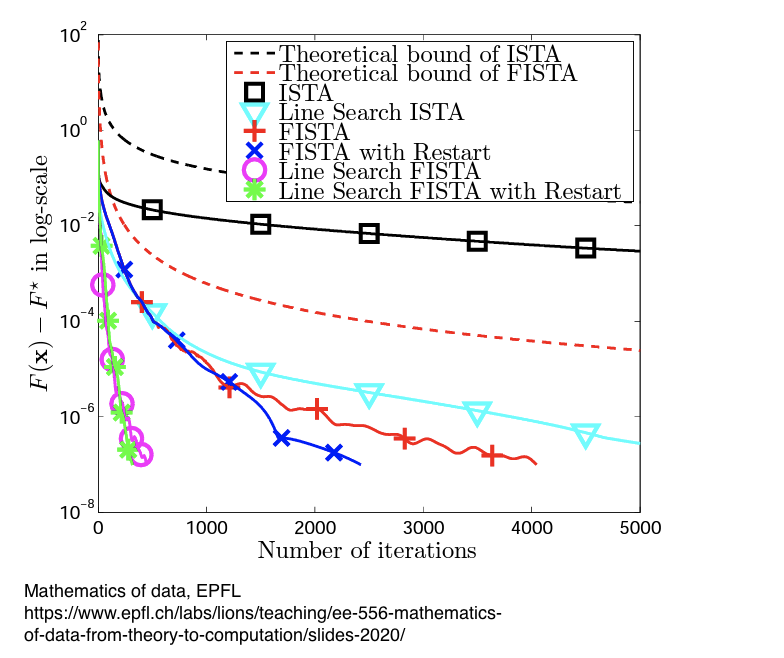

Machine learning is now ubiquitous across science and technology. Optimization is an inseparable part of it, since almost every learning task is formalized as an optimization problem. The simplest such problem is minimizing a convex error, such as the mean squared error, defined over a set of data samples. A regularizer is usually added to the learning problem to avoid overfitting. In this project, we focus on the generalized lasso problem, in which the regularizer is the ℓ1 norm applied to a linear transformation of the unknown variable. We want to investigate and compare the convergence speed of current algorithms for solving this problem. The project will focus on the case where the linear transformation has a sparse matrix representation and promotes continuous, piecewise-linear solutions with few linear regions. The student should be familiar with optimization and Python.
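To make the problem concrete, the sketch below sets up one possible instance of the generalized lasso and solves it with ADMM while recording the objective value at every iteration, which is the kind of curve one would compare across solvers. Everything specific here is an assumption made for illustration and is not prescribed by the project: the data term is a plain denoising loss 0.5·‖x − y‖² (identity forward operator), the linear transformation L is a second-order finite-difference matrix (sparse, and zero on linear ramps, so it favors piecewise-linear solutions), ADMM is just one candidate algorithm, and the names `second_difference`, `soft_threshold`, `objective`, and `admm_generalized_lasso` are hypothetical.

```python
import numpy as np
import scipy.sparse as sp
from scipy.sparse.linalg import splu


def second_difference(n):
    """Sparse (n-2) x n second-order finite-difference matrix (assumed choice of L)."""
    return sp.diags([1.0, -2.0, 1.0], [0, 1, 2], shape=(n - 2, n), format="csc")


def soft_threshold(v, t):
    """Proximal operator of t * ||.||_1, applied elementwise."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)


def objective(x, y, L, lam):
    """Generalized lasso cost with an identity forward operator (assumption)."""
    return 0.5 * np.sum((x - y) ** 2) + lam * np.sum(np.abs(L @ x))


def admm_generalized_lasso(y, L, lam, rho=1.0, n_iter=500):
    """ADMM on the splitting z = L x; returns the last x and the cost history."""
    n = y.size
    # The x-update solves (I + rho * L^T L) x = y + rho * L^T (z - u).
    # The system matrix is sparse and fixed, so it is factorized once.
    A = (sp.identity(n, format="csc") + rho * (L.T @ L)).tocsc()
    solve = splu(A).solve
    z = np.zeros(L.shape[0])
    u = np.zeros(L.shape[0])  # scaled dual variable
    history = []
    for _ in range(n_iter):
        x = solve(y + rho * (L.T @ (z - u)))
        Lx = L @ x
        z = soft_threshold(Lx + u, lam / rho)
        u = u + Lx - z
        history.append(objective(x, y, L, lam))
    return x, np.array(history)


# Usage: noisy samples of a function with a single kink.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 100)
y = np.abs(t - 0.5) + 0.1 * rng.standard_normal(t.size)
L = second_difference(t.size)
x_hat, history = admm_generalized_lasso(y, L, lam=1.0)
```

Plotting `history` against the iteration count (or against wall-clock time) for several solvers and several values of `rho` and `lam` would give a first, rough convergence comparison under these assumptions.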

- Supervisors

- Alexis Goujon, alexis.goujon@epfl.ch, BM 4.139

- Mehrsa Pourya, mehrsa.pourya@epfl.ch, BM 4.139

- Michael Unser, michael.unser@epfl.ch, 021 693 51 75, BM 4.136